Machine Learning Saves Time & Reduces Errors for Medical Coding with 1 App for 4 OSs

Challenge

CPS wanted to enable physicians to conduct secure remote and virtual appointments using existing applications.

Solution

ClearScale used various AWS services, including Amazon Connect and AWS Lambda, to add HIPAA-compliant telemedicine capabilities to CPS’s IT environment.

Benefits

CPS physician users can now connect directly to individual patients through their personal devices in order to conduct medical appointments at a distance.

AWS Services

Amazon Connect, Amazon AppStream 2.0, AWS Lambda, AWS Fargate

Executive Summary

Creative Practice Solutions provides business consulting services to medical professionals, helping them optimize their practices and the services they deliver. One of their areas of focus is the medical coding process, which is complicated, time-consuming, and often prone to errors.

Working with ClearScale and AWS, the company developed an application that uses artificial intelligence (AI), machine learning (ML), and deep learning (DL) to help overcome those issues — increasing both the efficiency and accuracy of the medical coding process. The app also works on four different operating systems while using a single code base, in essence, delivering four apps for the price of one.

The Challenge

Among the biggest challenges in developing the medical coding app were the coding and speech transcription processes.

Medical coding staff charged with assigning medical codes and using them for billing purposes rely on dictated notes created by medical professionals after patient visits. Proper medical coding requires listening to recordings, deciphering diagnoses and matching them to procedure codes drawn from numerous code systems, applying complex medical billing rules, and much more.

Numerous software programs have been developed to ease the burden through macros and automation; however, these programs typically fall short. Two other factors contribute to the problem: a) rules for bundling services within a single code; and b) misinterpretation of, or inability to decipher, notes.

Transcribing voice recordings to text helps, but comes with its own challenges, including:

- numerous pronunciation and spelling variances

- issues with voice activity detection

- terminology differences when more than one language is involved, which can affect voice-to-text accuracy

While AI and ML can improve transcription results, voice activity detection, language and pronunciation differences, and a variety of other factors still affect the overall outcome. Also, few companies have been able to leverage these technologies in a way that is cost-efficient and meets other criteria, such as the need for HIPAA compliance.

An additional challenge was presented by Creative Practice Solutions’ desire to make the app available across multiple devices and platforms at the lowest cost possible. The UX needed to be the same regardless of the device, and it was essential to keep features at parity across the apps for each device.

Until recently, the only option for doing that was cross-platform development using frameworks such as Electron, React Native, Flutter, and Xamarin. However, cross-platform apps don’t integrate flawlessly with their target operating systems, and they don’t always perform optimally because of inconsistent communication between the non-native code and the device’s native components.

The Medical Billing Coding Process Solution

ClearScale’s solution offers a HIPAA-compliant way to optimize coding productivity and the accuracy of medical coding and billing processes. It’s also cost-effective by virtue of employing practically the same code base to work across four different operating systems.

The solution uses serverless architecture based on AWS services. AWS Step Functions, a managed service, coordinates the multiple AWS services into serverless workflows. The service is well suited to crafting long-running workflows such as ML model training, as well as for building high-volume, short-duration workflows.

The process starts with a medical professional recording medical appointment notes, typically on a mobile device. The user has the ability to review and edit the notes if needed.

The app uploads the recorded notes to an Amazon Simple Storage Service (S3) object storage bucket. This triggers an AWS Lambda function to start the processing cycle. Lambda allows for running code without provisioning or managing servers.

The cycle starts with an Amazon Transcribe service job. Amazon Transcribe uses a deep learning process called automatic speech recognition (ASR) to quickly convert speech to text. Voice activity detection (VAD) algorithms are used to help differentiate human speech from background noise.
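As a rough illustration of this step, the sketch below turns an S3 upload event into arguments for the Amazon Transcribe `StartTranscriptionJob` call. It is not ClearScale's code: the function names, the way the job name is derived, and the choice to let Transcribe identify the language between US English and US Spanish are all assumptions made for the example.

```python
import re
import urllib.parse


def build_transcribe_request(event: dict) -> dict:
    """Turn an S3 "object created" event into StartTranscriptionJob arguments."""
    s3_info = event["Records"][0]["s3"]
    bucket = s3_info["bucket"]["name"]
    # Object keys arrive URL-encoded in S3 event notifications.
    key = urllib.parse.unquote_plus(s3_info["object"]["key"])
    # Job names may only contain letters, digits, '.', '_' and '-'.
    # Assumption: the key is unique enough to double as the job name.
    job_name = re.sub(r"[^0-9A-Za-z._-]", "-", key)[:200]
    return {
        "TranscriptionJobName": job_name,
        "Media": {"MediaFileUri": f"s3://{bucket}/{key}"},
        # Assumption: let Transcribe pick between the two supported languages.
        "IdentifyLanguage": True,
        "LanguageOptions": ["en-US", "es-US"],
    }


def handler(event, context):
    """Hypothetical Lambda entry point for the upload trigger."""
    import boto3  # imported lazily so the helper above can be exercised offline

    request = build_transcribe_request(event)
    boto3.client("transcribe").start_transcription_job(**request)
    return {"job": request["TranscriptionJobName"]}
```

Keeping the request builder separate from the `boto3` call makes the event parsing easy to check without AWS credentials.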

The transcription file is then moved from transitional internal AWS secure storage to the separate raw-transcripts S3 bucket. This triggers another Lambda function, which downloads the transcription and extracts raw text from it.
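The text-extraction step might look like the following sketch. It assumes the transcription file follows the usual Amazon Transcribe output layout, with the text under `results.transcripts`, and that a `language_code` field is present when language identification was enabled. The function name `extract_transcript` is hypothetical.

```python
import json


def extract_transcript(raw_json: str) -> dict:
    """Pull the plain text (and language, if present) out of a Transcribe result file."""
    doc = json.loads(raw_json)
    results = doc["results"]
    # Transcribe stores the full text in a list of transcript objects.
    text = " ".join(t["transcript"] for t in results["transcripts"]).strip()
    # Assumption: language_code is only present when the job identified the language.
    return {"text": text, "language_code": results.get("language_code")}
```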

Another function determines if the text is in English. If so, it’s uploaded as a JSON file to the English-transcripts S3 bucket. If it’s Spanish, Amazon Translate uses deep learning models to create an accurate translation. The data is then uploaded as a JSON file to the English-transcripts S3 bucket.
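The routing logic can be sketched as below. How the original function detects the language is not described, so this example assumes a language code such as `en-US` or `es-US` is already known. `to_english` and `translate_with_aws` are hypothetical names, while `translate_text` is the standard Amazon Translate call in `boto3`.

```python
from typing import Callable


def to_english(text: str, language_code: str, translate: Callable[[str], str]) -> dict:
    """Return an English-language record, translating Spanish input when needed."""
    lang = language_code.split("-")[0].lower()
    if lang == "en":
        english = text  # already English: pass through unchanged
    elif lang == "es":
        english = translate(text)  # Spanish: delegate to the translation callable
    else:
        raise ValueError(f"unsupported language: {language_code}")
    return {"source_language": language_code, "text": english}


def translate_with_aws(text: str) -> str:
    """Spanish-to-English translation through Amazon Translate."""
    import boto3  # imported lazily so to_english can be tested without AWS access

    response = boto3.client("translate").translate_text(
        Text=text, SourceLanguageCode="es", TargetLanguageCode="en"
    )
    return response["TranslatedText"]
```

In a Lambda function, the returned dictionary would be serialized with `json.dumps` and uploaded to the English-transcripts bucket. Passing the translator in as a parameter keeps the branch logic testable with a stub.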

Yet another Lambda function is triggered to download the JSON file. From there, Amazon Comprehend Medical, a natural language processing service, extracts relevant medical information, such as medical codes, from unstructured text.
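The source does not say which Amazon Comprehend Medical operation is used. As one possibility, the sketch below assumes the `InferICD10CM` operation, which links detected conditions to ICD-10-CM codes, and keeps the highest-scoring code for each entity. The helper names and the `min_score` threshold are illustrative assumptions.

```python
def top_icd10_codes(response: dict, min_score: float = 0.5) -> list:
    """Keep the best-scoring ICD-10-CM concept for each detected entity."""
    codes = []
    for entity in response.get("Entities", []):
        concepts = entity.get("ICD10CMConcepts", [])
        if not concepts:
            continue  # entity had no linked codes
        best = max(concepts, key=lambda c: c["Score"])
        if best["Score"] >= min_score:
            codes.append(
                {"text": entity["Text"], "code": best["Code"], "description": best["Description"]}
            )
    return codes


def infer_codes(text: str) -> list:
    """Call Amazon Comprehend Medical and reduce the response to candidate codes."""
    import boto3  # imported lazily so the filtering logic can be tested offline

    response = boto3.client("comprehendmedical").infer_icd10_cm(Text=text)
    return top_icd10_codes(response)
```

A human coder would still review the candidates; the threshold only controls how many low-confidence suggestions reach them.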

The results are analyzed, and a medical note is created and saved to an Amazon DocumentDB database. A Lambda function then sends a push notification to all user application instances to reload medical notes.

The solution incorporates features to enhance accessibility. For example, it employs Apple’s VoiceOver gesture-based screen reader for vision accessibility. Because every individual has his or her own “voice preset” covering things such as pitch and sound frequency, the app includes the ability to change the VAD frequency for each user. The app also offers a configurable setting for how long no speech may be detected before recording is stopped.
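The silence timeout can be pictured with a small sketch. It assumes the VAD produces one speech/no-speech flag per fixed-length audio frame. The function and parameter names are hypothetical, and the real app presumably implements this natively on the device.

```python
from typing import Iterable, Optional


def stop_index(speech_flags: Iterable[bool], frame_ms: int, max_silence_ms: int) -> Optional[int]:
    """Return the index of the frame at which recording should stop, or None."""
    silent_ms = 0
    for i, is_speech in enumerate(speech_flags):
        # Any detected speech resets the silence counter.
        silent_ms = 0 if is_speech else silent_ms + frame_ms
        if silent_ms >= max_silence_ms:
            return i
    return None  # the timeout was never reached
```

A per-user setting would simply supply a different `max_silence_ms`, so a speaker who pauses often can use a longer timeout.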

Two different kinds of search functions are incorporated as well: predicate-based search, which requires an exact match of some part of the word, and fuzzy search, which is more heuristic-based. The latter is useful for cases where it is hard to remember the exact wording when conducting a search, or it is faster to search for a patient’s name based on initials.
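To make the difference between the two search styles concrete, here is a minimal sketch using only the Python standard library. The app's actual matching rules are not described, so the initials check and the `difflib` similarity cutoff are assumptions chosen for illustration.

```python
import difflib


def predicate_search(query: str, names: list) -> list:
    """Exact substring match, ignoring case."""
    q = query.casefold()
    return [n for n in names if q in n.casefold()]


def initials(name: str) -> str:
    """'Maria Gonzalez' -> 'mg'."""
    return "".join(part[0] for part in name.split()).casefold()


def fuzzy_search(query: str, names: list, cutoff: float = 0.6) -> list:
    """Heuristic match: initials first, then approximate spelling."""
    q = query.casefold()
    by_initials = [n for n in names if initials(n) == q]
    lowered = {n.casefold(): n for n in names}
    # get_close_matches ranks candidates by similarity ratio, best first.
    close = difflib.get_close_matches(q, list(lowered), n=5, cutoff=cutoff)
    return by_initials + [lowered[c] for c in close if lowered[c] not in by_initials]
```

With a patient list containing "John Smith", the misspelled query "jon smith" finds nothing through `predicate_search` but still surfaces the record through `fuzzy_search`.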

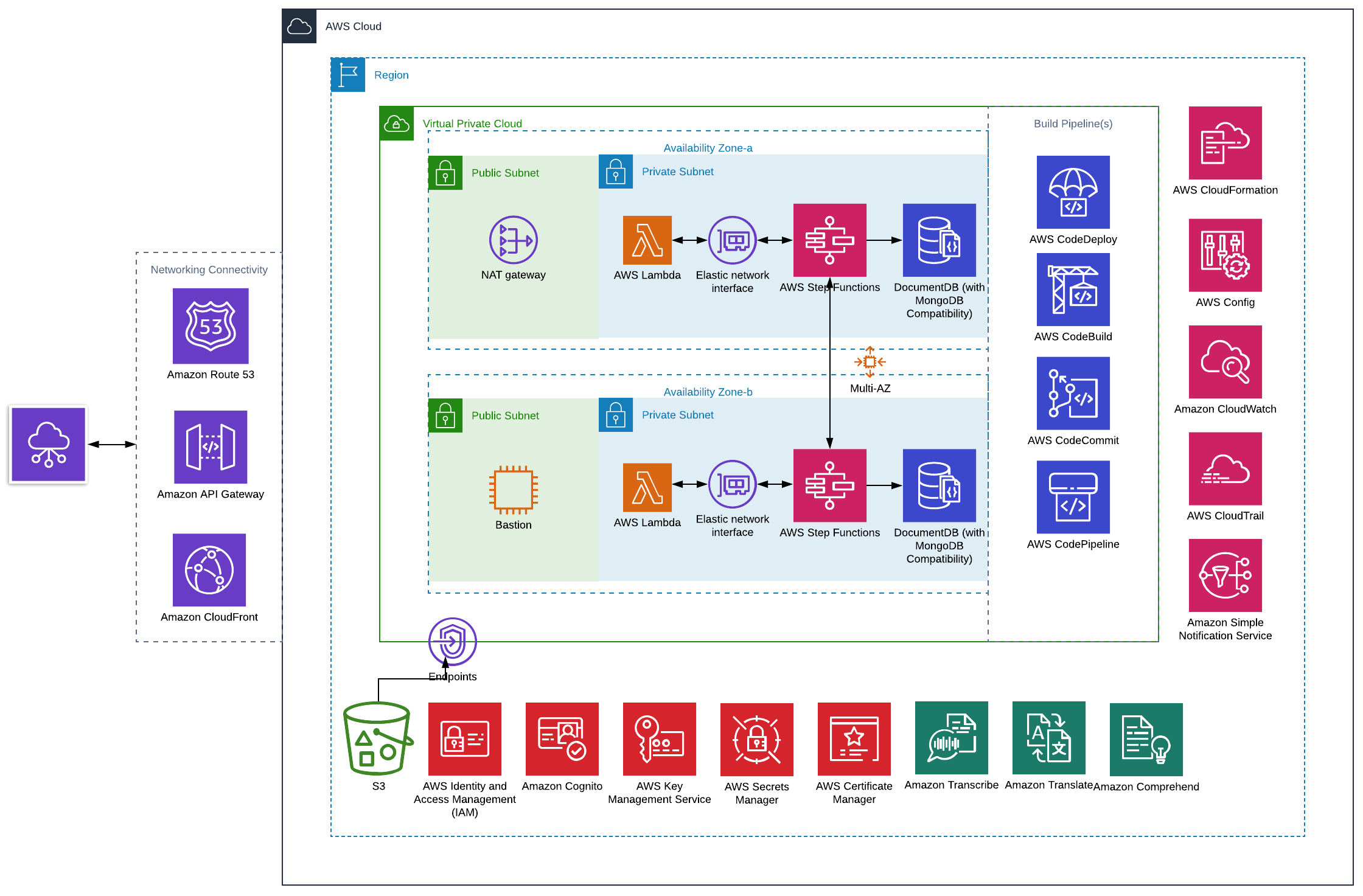

Architecture Diagram

Read more about the solution here: https://blog.clearscale.com/using-aws-ml-and-ai-for-smarter-medical-coding/

The Multiple Platform Solution

ClearScale also took advantage of several recent developments from Apple to enable the app to work across multiple platforms with no change to the code base. That included the September 2019 release of Catalyst, which makes it easy to take apps developed natively for iOS devices and run them natively on Macs. Catalyst apps are built using the iOS 13 software development kit (SDK), with relatively few changes to the API set.

Catalyst includes the Apple UIKit framework, which provides the required infrastructure for iOS or tvOS apps. UIKit unifies the substrate layer between iOS and macOS so iOS apps can run on macOS. The apps maintain the same design and functionality as on iPhones and iPads, but the UX and UI are adapted to match macOS design patterns.

Apple also split its iOS into iOS 13 for iPhone and iPod Touch and iPadOS for iPad. This allows for taking advantage of enhancements specific to each device, including features that make sense for the iPad’s bigger displays and hardware.

The ClearScale solution employs a modular architecture in which the business logic components (without UI/UX) are separated into a base framework with two targets, UIKit and UIKit-tvOS. The AWS SDK was used for the Mac Catalyst and tvOS targets.

The different UI capabilities required augmenting the support of the drop-in AWS authentication. Because AWS provides Cognito Advanced Security features as a compiled library, the library had to be disabled on macOS and tvOS.

Properly adapting the AWS SDK required using preprocessor macros for C/C++/Objective-C code because the AWS SDK is written using low-level APIs and Objective-C, Apple’s pre-Swift language. Linker and build scripts were used to build the app on tvOS in the usual way. The UI/UX logic was divided between UIKit and UIKit-tvOS executables.

To achieve user interaction parity, ClearScale used conditional compilation, in which parameters supplied at compile time control which parts of the source are compiled into each executable.

This allowed ClearScale to use code dedicated to the specific platform in the source files for all platforms. Doing so enabled the addition of file picker support on macOS and keyboard support on macOS and iPadOS. In the future, this will allow for other enhancements such as adding PencilKit support for iPadOS.

The architecture will also allow for providing Apple Watch support in the future. Currently, a different UI engine, WatchKit, is required. However, the business logic created will remain the same, which could save 70-80% of the time needed for development.

Read more about the solution here: https://www.clearscale.com/blog/native-app-development-one-codebase-four-platforms/

The Results

ClearScale is currently working with Creative Practice Solutions on the next phase of the app’s development. That includes an enhanced cloud medical billing proof of concept (POC) and a client application user experience POC.

With many more features in the works, the initial app has already demonstrated numerous valuable benefits.

- Flexibility. The app works across multiple devices running any of four different operating systems from a single codebase, essentially yielding four apps for the price of one.

- Reduced costs. The use of AWS services also helps reduce costs, as demonstrated by Amazon Comprehend Medical. Creative Practice Solutions is charged only for the amount of text processed each month, so costs track actual usage and the size of the user base.

- Reduced management time. The use of various AWS managed services reduces administration and management time, and subsequently costs. With AWS Lambda, for example, code can be run for virtually any type of app or backend service with zero administration.

- Accuracy. The solution delivers a more accurate transcription of speech to text because background noise is eliminated, and there’s no need to recognize different speakers.

- English and Spanish. It has the capability of recognizing both US Spanish and US English and translating US Spanish to US English.

- HIPAA compliance. The solution is architected for HIPAA compliance with built-in security controls and the use of HIPAA-compliant services such as Amazon Comprehend Medical. It also incorporates security and data privacy best practices, including encryption at rest and in transit, audit trails, and multi-factor authentication.

- Export options. Medical notes can be exported using various formats — PDF, XML, JSON, PLIST, and HTML — for compatibility with a variety of systems.

- Accessibility. The solution incorporates numerous accessibility enhancements to make usage easier for a broader audience (e.g., VoiceOver, AssistiveTouch, and Dynamic Fonts).

This is just the beginning of what the app is poised to do as ClearScale, AWS, and Creative Practice Solutions continue to explore, develop, and test additional capabilities and features.